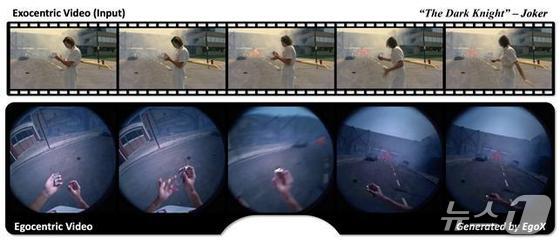

Imagine seeing Gotham City through the Joker’s eyes instead of simply watching him on screen in *The Dark Knight*.

On Monday, the Korea Advanced Institute of Science and Technology (KAIST) unveiled EgoX, an artificial intelligence (AI) model developed by a research team led by Professor Jae-Gul Jo of the Kim Jaechul Graduate School of AI. EgoX recreates a scene from a character’s point of view using only third-person footage.

With the rapid evolution of augmented reality (AR), virtual reality (VR), and AI robotics, first-person videos that capture what the wearer sees have become increasingly important. However, obtaining high-quality first-person footage has traditionally required wearing expensive action cameras or smart glasses, and converting existing third-person videos into convincing first-person views has remained technically difficult.

The technology goes beyond simply rotating the camera view. It analyzes the character’s position and posture, along with the three-dimensional (3D) structure of the surrounding environment, to reconstruct the scene as the character would see it.

Previous approaches were often limited to converting still images or required footage from multiple cameras. They also struggled with complex lighting and motion, producing unnatural-looking results.

EgoX, by contrast, can generate high-quality first-person video from a single third-person video. The research team modeled the relationship between head movement and the resulting field of view, so the generated view shifts naturally as the character turns their head.

The technology performed consistently across a range of everyday scenarios, such as cooking, exercising, and working. It offers a way to obtain high-quality first-person data from existing footage without specialized wearable devices.

Industry experts expect EgoX to have far-reaching implications across multiple sectors. In AR, VR, and metaverse applications, it could turn standard videos into immersive first-person experiences, significantly enhancing user engagement.

Notably, the videos it generates could serve as valuable training data for imitation learning in robotics, in which machines learn by observing human actions, potentially accelerating progress in both robotics and AI. The technology also paves the way for new video services, such as watching sports broadcasts or vlogs from the perspective of athletes or content creators.

Professor Jo emphasized that the research goes beyond video conversion, demonstrating AI’s capacity to understand and reconstruct human vision and spatial awareness. He said he anticipates a future in which anyone can create and experience immersive content from existing footage.

The paper, co-authored by KAIST PhD candidates Kang Tae-woong and Kim Ki-nam along with Seoul National University undergraduate researcher Kim Do-hyun, will be presented at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) in Colorado on June 3.

Since it was posted as a preprint on arXiv last year, the work has drawn significant attention from tech companies such as Nvidia and Meta, as well as from the broader AI industry and academic community.