On Thursday, LG AI Research unveiled its groundbreaking multimodal AI model, EXAONE 4.5, capable of simultaneously processing and analyzing both text and images.

EXAONE 4.5 represents a significant leap forward in AI technology, integrating a proprietary Vision Encoder with a Large Language Model (LLM) into a unified Vision-Language Model (VLM). This advancement builds upon the expertise LG AI Research has cultivated since introducing South Korea’s first multimodal AI model, EXAONE 1.0, in December 2021.

The standout feature of EXAONE 4.5 is its real-world reasoning capability, designed to tackle the complex, unstructured data prevalent in industrial environments.

This AI goes beyond simple image recognition, demonstrating an ability to synthesize textual and visual information from intricate design blueprints, financial statements, and technical contracts to grasp contextual nuances. This evolution marks a crucial step towards developing AI that can address real-world industrial challenges, advancing the concept of Physical Intelligence.

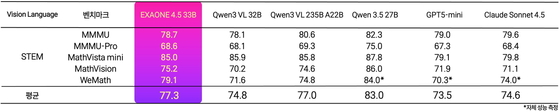

In performance evaluations, EXAONE 4.5 outshone global competitors, including OpenAI’s GPT-5 mini and Anthropic’s Claude Sonnet 4.5, across 13 metrics measuring AI visual processing and reasoning capabilities.

Notably, it achieved an impressive score of 77.3 on STEM (Science, Technology, Engineering, and Mathematics) performance metrics, showcasing world-class competitiveness. The model also surpassed Google’s latest offerings in coding performance and complex chart analysis.

EXAONE 4.5 also made significant strides in efficiency. Despite a dramatic reduction in parameter count to 33 billion, just one-seventh of previous models, it maintained equivalent text-reasoning performance through advanced technologies such as the hybrid attention structure. The model now supports six languages, including Korean, English, Spanish, Japanese, and Vietnamese.

In a move to foster AI innovation, LG AI Research has made EXAONE 4.5 available on the global platform Hugging Face for research and educational purposes. This initiative paves the way for the expansion of the K-EXAONE project, a domestic AI foundation model. The ultimate goal is to develop Physical Intelligence capable of understanding and making decisions about voice, video, and physical environments.

LG AI Research is also prioritizing the model’s reliability. Using a proprietary AI risk classification system, the team plans to collaborate with relevant organizations to refine the model’s understanding of Korean historical and cultural contexts through high-quality data training.

Lee Jin Sik, head of the EXAONE Lab at LG AI Research, said EXAONE 4.5 marks the beginning of a new phase in multimodal AI capable of understanding both text and images, adding that the team aims to expand into voice, video, and physical environment data to enable AI systems to make decisions and act in real-world industrial settings.