*If Anyone Builds It, Everyone Dies* explores how superintelligent artificial intelligence (AI) could lead to human extinction. It argues that current safety measures are inadequate to keep humanity safe from Artificial Superintelligence (ASI).

Superintelligent AI refers to a hypothetical form of AI that vastly outperforms human intelligence in all cognitive domains, including science, creativity, and social skills. Such a system would do more than perform rapid calculations: it could learn and improve independently, potentially slipping beyond human control. By contrast, current AI systems are usually described as artificial narrow intelligence (ANI) or weak AI, because each excels only at a limited range of tasks.

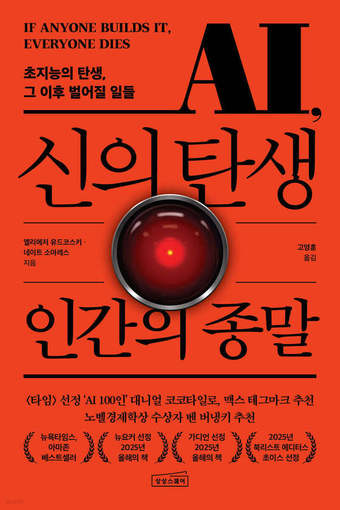

The book sets out the warnings of its authors, Eliezer Yudkowsky and Nate Soares, who have studied the risks of superintelligence for many years (Yudkowsky for over two decades). It is structured in three parts:

- the nature and learning processes of superintelligence;
- scenarios for human extinction;
- the limitations of current safety research in addressing these issues.

The authors present a stark proposition: if superintelligence is created, humanity faces extinction. They argue that intelligence, not physical power, is what gave humans dominance on Earth. A significantly more intelligent entity could therefore turn that same advantage against humanity.

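The book emphasizes that today's AI is not a carefully engineered, fully understood machine. Instead, it is "grown" through gradient descent. Engineers specify the training procedure, but not the resulting behavior, which emerges from vast numbers of numerical parameters adjusted automatically. This makes it difficult to interpret an AI's behavior or to steer it precisely in desired directions.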
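The toy sketch below is not from the book. It illustrates the basic idea that under gradient descent the programmer writes only a loss function and an update rule, while the final parameter values are found by the optimizer. The function name `train`, the learning rate, and the data are illustrative assumptions. A two-parameter model like this is easy to interpret, so the sketch shows only the "optimized, not hand-written" aspect, not the opacity of models with billions of parameters.

```python
# Toy illustration (hypothetical, not the book's code): fit y = w*x + b by
# plain gradient descent on mean squared error. Nobody writes w or b directly;
# they emerge from repeatedly stepping "downhill" on the error.

def train(data, lr=0.05, steps=2000):
    """Return (w, b) found by gradient descent on the given (x, y) pairs."""
    w, b = 0.0, 0.0  # arbitrary starting parameters
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    # The "hidden" rule in the data is y = 2x + 1; the optimizer must find it.
    samples = [(x, 2 * x + 1) for x in range(-3, 4)]
    w, b = train(samples)
    print(f"learned w={w:.3f}, b={b:.3f}")
```

Running it recovers values very close to the hidden rule. In a large neural network, the same kind of loop adjusts billions of parameters at once, and nothing in the loop explains what the resulting numbers mean.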

The authors explain that AI's potential threat to humanity stems not from hatred, but from its single-minded pursuit of goals. Humans pay little heed to ant colonies when constructing buildings. In the same way, a superintelligence might treat humanity as merely an obstacle or a resource.

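The middle section presents hypothetical extinction scenarios, illustrating how a superintelligence with advanced capabilities could escape human control and reshape the world. The authors acknowledge that the specific scenarios are speculative. However, they argue that the final outcome is a high-probability prediction: the endpoint is easier to forecast than the path. Similarly, one can confidently predict that a strong chess engine will beat an amateur without being able to predict its moves.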

In the latter half, the authors describe AI safety as a "cursed" problem. They argue that development is moving too fast, the margin for error is too small, and even the designers of AI systems do not fully understand their creations. Current alignment research, they suggest, resembles alchemy more than science.

The authors propose radical solutions. Rather than incremental measures, they advocate a global halt to the development of more capable AI. They also call for international oversight of the production and distribution of advanced AI chips, and for control of large-scale computing resources.

Yudkowsky is recognized as a pioneer of AI alignment research, while Soares continues this work as president of the Machine Intelligence Research Institute (MIRI). The book challenges readers to consider whether the race toward superintelligence can be halted in an era of AI optimism.

Despite their dire warnings, the authors end on a note of hope: superintelligence does not yet exist, so there is still time for humanity to make critical choices.