Artificial intelligence (AI), which powers everything from facial recognition on smartphones to autonomous vehicles, has long been considered an impenetrable black box. Now, a new security threat has emerged that can decode an AI model’s internal architecture from beyond physical barriers.

On Tuesday, the Korea Advanced Institute of Science and Technology (KAIST) revealed that a research team led by Professor Han Joon from the School of Computing, in collaboration with the National University of Singapore (NUS) and Zhejiang University in China, has developed an attack system called ModelSpy. This system can remotely extract AI model structures using only a small antenna.

The technology works much like an eavesdropping device: it captures minute signals emitted while an AI model is running and analyzes them to infer the model’s internal structure. The research team focused on the electromagnetic waves generated by the graphics processing units (GPUs) that perform AI computations.

During complex AI calculations, GPUs emit extremely faint electromagnetic signals. The team successfully analyzed these signal patterns to reconstruct the model’s layer composition and detailed parameters. In essence, they reverse-engineered the AI’s blueprint using electromagnetic signals as clues.
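
To make the idea concrete, here is a toy sketch in Python. It is not the ModelSpy method, whose details are not described here. It assumes, purely for illustration, that each layer type leaves a distinct dominant frequency in the emitted signal and that the boundaries between layers are already known. The names `SIGNATURES`, `WINDOW`, `synth_trace`, `band_energy`, and `recover_layers` are all hypothetical, and the "trace" is synthetic rather than measured from any GPU.

```python
import math
import random

# Hypothetical signature frequencies (cycles per sample) for each layer type.
# These values are invented for illustration, not measured from any hardware.
SIGNATURES = {"conv": 0.05, "fc": 0.12, "pool": 0.20}
WINDOW = 200  # samples per layer; assumed known (a real attack must find boundaries)


def synth_trace(layers, noise=0.5, seed=0):
    """Build a fake emission trace: one noisy sinusoid burst per layer."""
    rng = random.Random(seed)
    trace = []
    for name in layers:
        freq = SIGNATURES[name]
        for n in range(WINDOW):
            trace.append(math.sin(2 * math.pi * freq * n) + rng.gauss(0.0, noise))
    return trace


def band_energy(window, freq):
    """Energy of `window` at `freq`, via correlation with cosine and sine."""
    re = sum(x * math.cos(2 * math.pi * freq * n) for n, x in enumerate(window))
    im = sum(x * math.sin(2 * math.pi * freq * n) for n, x in enumerate(window))
    return re * re + im * im


def recover_layers(trace):
    """Label each fixed-length window with the best-matching signature."""
    recovered = []
    for start in range(0, len(trace), WINDOW):
        window = trace[start:start + WINDOW]
        best = max(SIGNATURES, key=lambda name: band_energy(window, SIGNATURES[name]))
        recovered.append(best)
    return recovered


# The "victim" architecture the eavesdropper never sees directly.
secret = ["conv", "pool", "conv", "fc"]
guess = recover_layers(synth_trace(secret))
accuracy = sum(a == b for a, b in zip(secret, guess)) / len(secret)
```

In this simplified setting the eavesdropper only compares the energy at a few candidate frequencies per window. A real side-channel attack would face far weaker signals, unknown layer boundaries, and interference from other electronics, which is why the reported results through walls and at a distance are notable.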

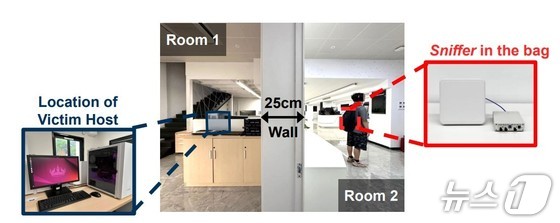

In experiments with five cutting-edge GPU models, the research team demonstrated that they could accurately identify AI model structures through walls or from distances of up to 6 m. Notably, they estimated the layers that form the core structure of deep learning models with up to 97.6% accuracy.

The attack method is what makes this research particularly alarming. Unlike traditional hacking, which requires direct server infiltration or malware implantation, this approach needs only an antenna small enough to fit in a bag. Experts warn this could pose a significant security threat.

The research team cautioned that if misused, this technology could lead to the theft of companies’ critical AI assets. They also proposed countermeasures, including electromagnetic wave disruption and computational obfuscation, offering practical defense strategies beyond mere attack demonstrations. This approach has been lauded as a responsible example of security research.

Professor Han stated that the study proves AI systems can be vulnerable to new attacks even in physical environments. He added that it is crucial to establish a cyber-physical security framework encompassing both hardware and software to safeguard critical AI infrastructure, such as autonomous driving systems and national utilities.

The research findings, with Professor Han as co-corresponding author, were presented at the Network and Distributed System Security (NDSS) Symposium 2026, an international computer security conference, where they received the Best Paper Award for their groundbreaking contributions.